This report presents findings from a large-scale national survey of Norwegian board members conducted in early 2026.

It examines how board members adopt, use, and govern artificial intelligence in their board work — and what implications this holds for governance, competence, and institutional readiness.

The survey comprised 33 questions across seven thematic modules and was distributed through Orgbrain, Styreforeningen, Styreinstituttet, CORPRT, ConnectVest, and LinkedIn board member communities.

Key takeaways

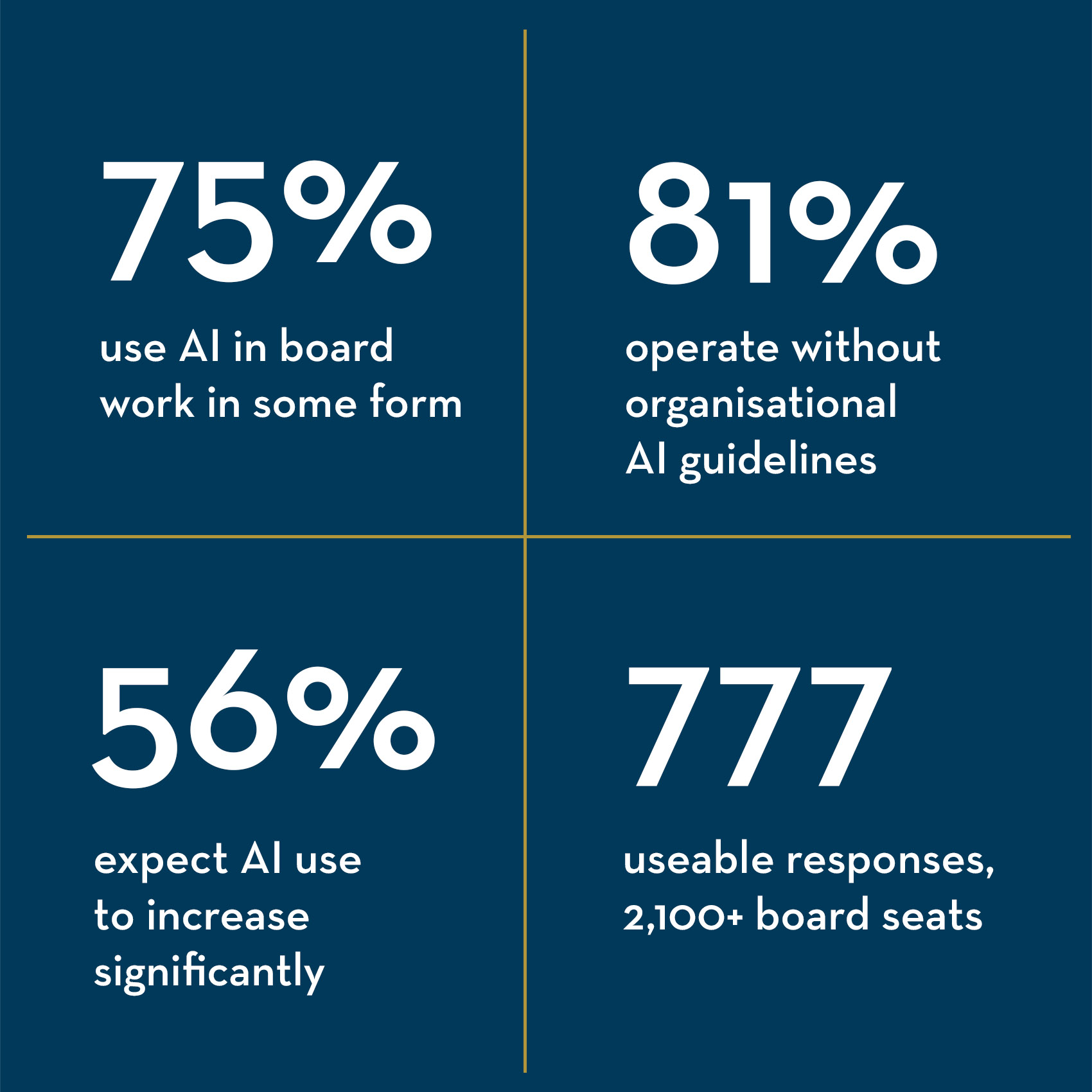

AI is already in the room. Three in four board members use AI in some form in their board work, and the overwhelming majority of those who do expect to use it more. Adoption is not approaching; it has arrived.

Governance is lagging. More than four in five board members operate without formal organisational AI guidelines. Most boards have not discussed the topic, and board members are routinely sharing strategic and financial data with AI tools without any organisational framework.

The decision barrier holds. Across all archetypes, all board types, and all levels of training, comfort with AI as a decision-maker is low and convergent. This reflects a principled position about human judgment in governance — not a knowledge gap that training can close.

Use concentrates in administration. AI delivers its clearest value in preparation and in reducing routine tasks. Use in analytically demanding areas such as risk management and strategic planning remains substantially less common and yields less perceived benefit.

Governance comes first. Board members prioritise secure and approved tools and clear guidelines above training, legal guidance, or practical examples. The primary demand is for institutional legitimacy — not more capability.

A widening divergence. Power Users and Professionals expect significant acceleration in AI use. Efficiency Users are consolidating around a stable, narrow role. The gap between the most and least AI-active board members is likely to grow.

Key findings

AI adoption and usage

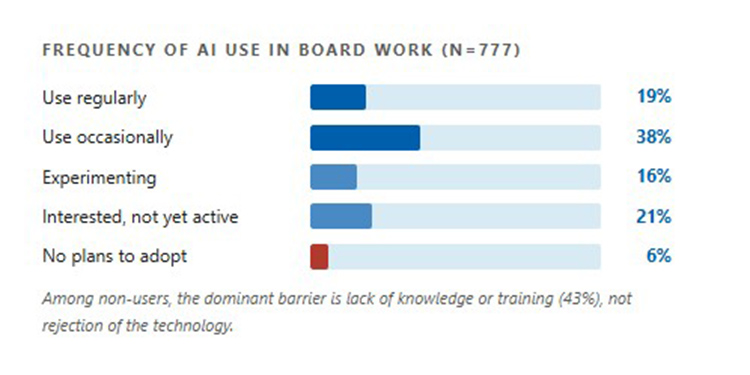

Three in four board members now use AI

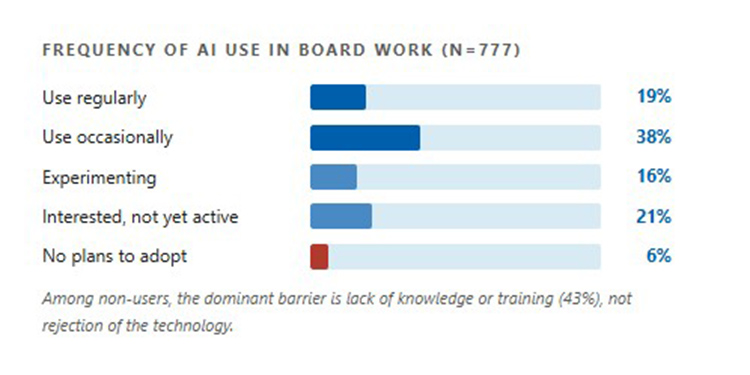

75 percent of respondents engage with AI tools in some form in their board work — ranging from daily integrated practice to cautious experimentation. A further 21 percent describe themselves as interested but not yet active, and only 6 percent have no plans to adopt. Adoption is not approaching — it has arrived.

Among active users, regular use (weekly or more) stands at 19 percent. The largest single group — 38 percent — uses AI occasionally, suggesting broad but shallow engagement across the sample.

Areas of use

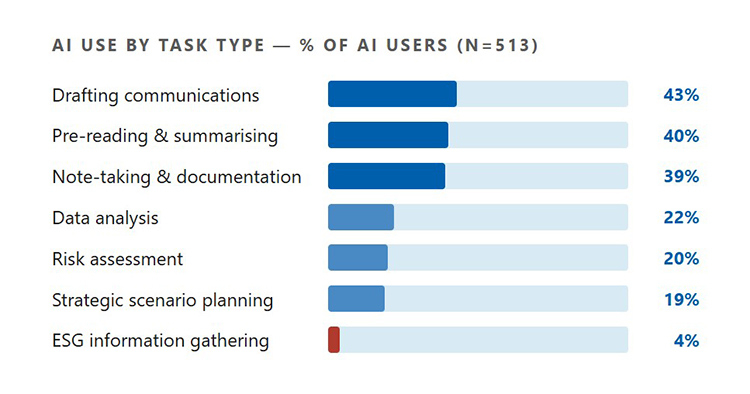

Administration dominates; analytical use lags

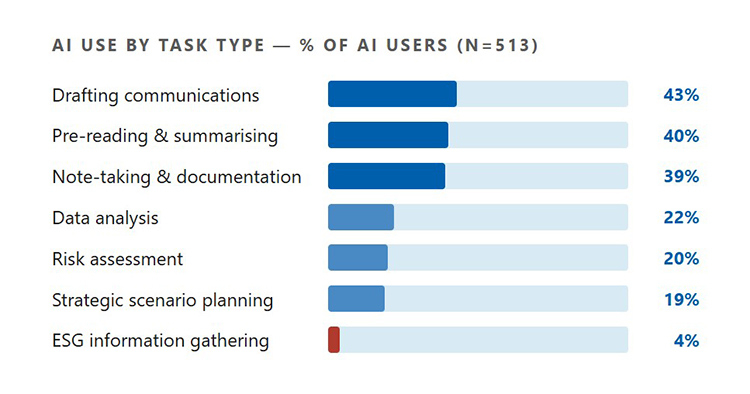

The most frequently reported applications are drafting communications (43%), pre-reading and summarising board materials (40%), and note-taking and documentation (39%). These are bounded, repetitive tasks — the kind where AI can assist without requiring deep judgment.

More analytical uses are present but far less common. Data analysis (22%), risk assessment (20%), and strategic scenario planning (19%) rank well below the administrative tasks. ESG information gathering ranks last at 4 percent.

AI tools and platforms

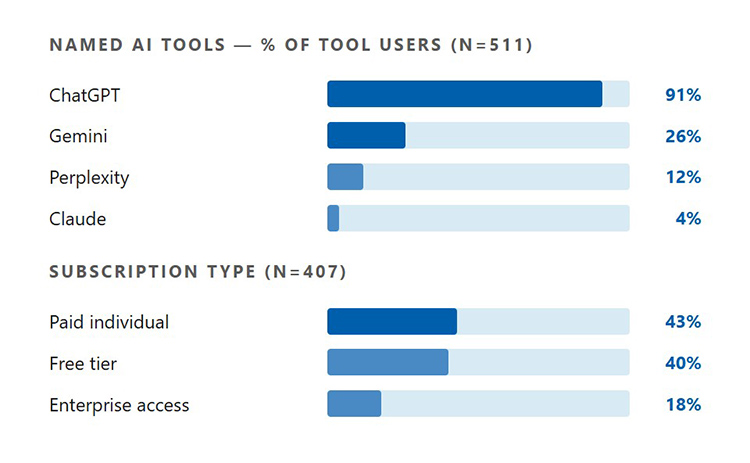

ChatGPT dominates; governance implications are significant

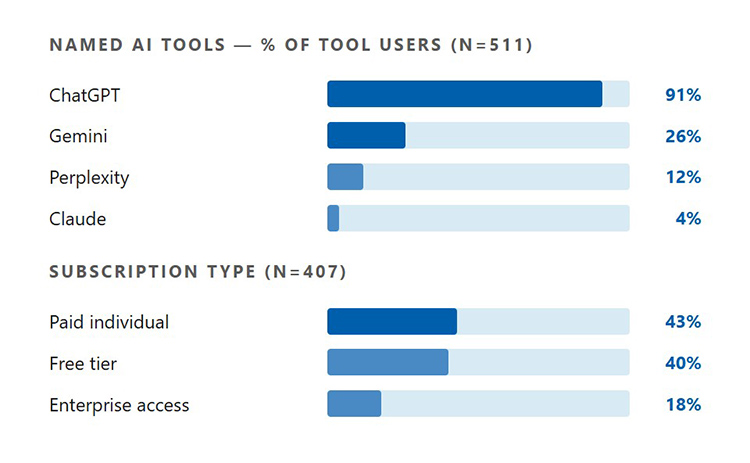

Publicly available general-purpose AI services are by far the most used category (82%). ChatGPT is named by 91 percent of those specifying a tool — more than three times the share citing any other product. Board-specific software (29%) and enterprise solutions (30%) are secondary.

The subscription picture has governance significance: 43 percent pay for individual accounts and 40 percent use free tiers. These are precisely the tool categories least likely to be subject to organisational procurement review or data processing agreements.

Data sharing practices

Sensitive data flows without governance frameworks

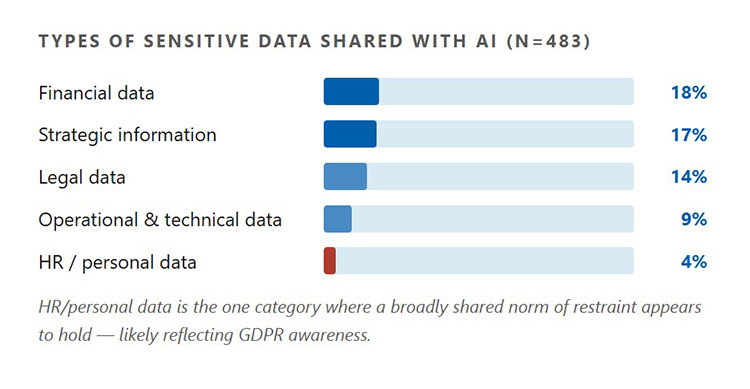

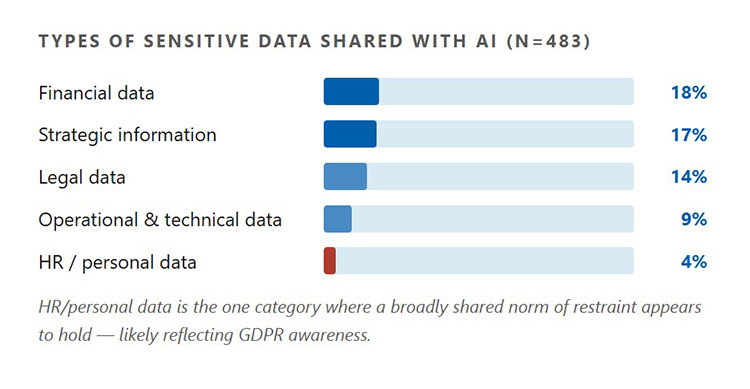

Sharing sensitive information with AI tools is less common than AI use itself, but it is far from rare. Among the 483 respondents who answered this question, meaningful shares report actively feeding board-sensitive material into AI systems — and the categories involved are not trivial.

Board members are routinely entering financial, strategic, and legal information into AI systems without any organisational framework governing what can be shared, with which tools, or under what conditions. The governance gap is not hypothetical.

Perceived benefits

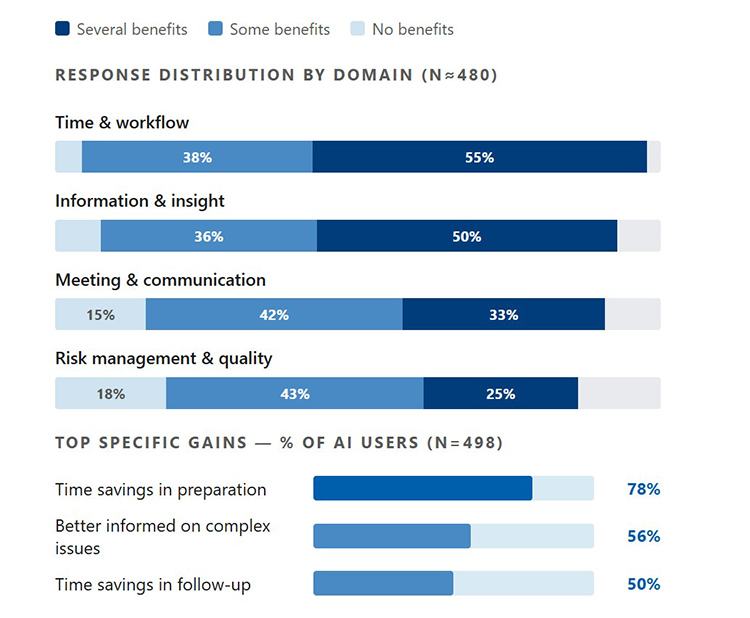

Strongest in preparation; weakest in high-stakes work

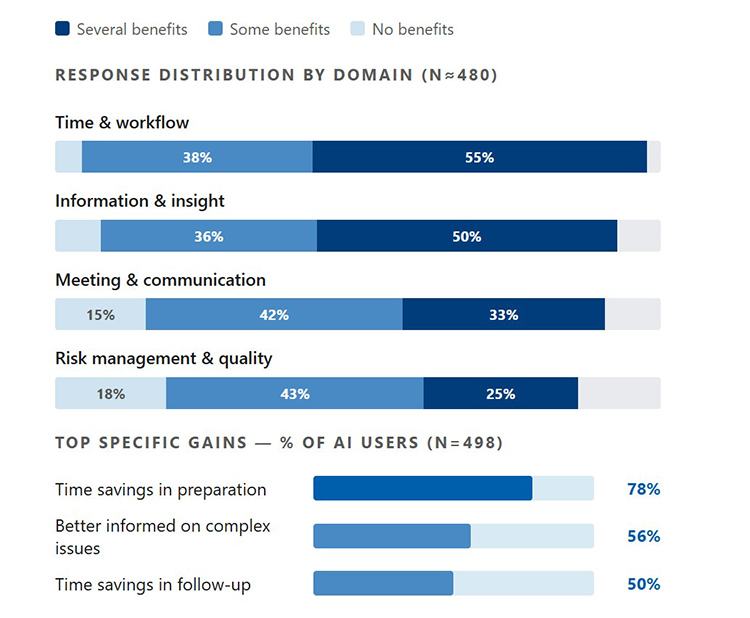

AI delivers its clearest value in time and workflow efficiency: 78 percent of users report time savings in preparation as a concrete gain. Perceived benefit weakens as tasks become more demanding and is lowest in risk management.

Even current users believe significant untapped potential remains. 79 percent see significant or moderate additional value — suggesting the ceiling of perceived benefit is well above current experience.

Governance & guidelines

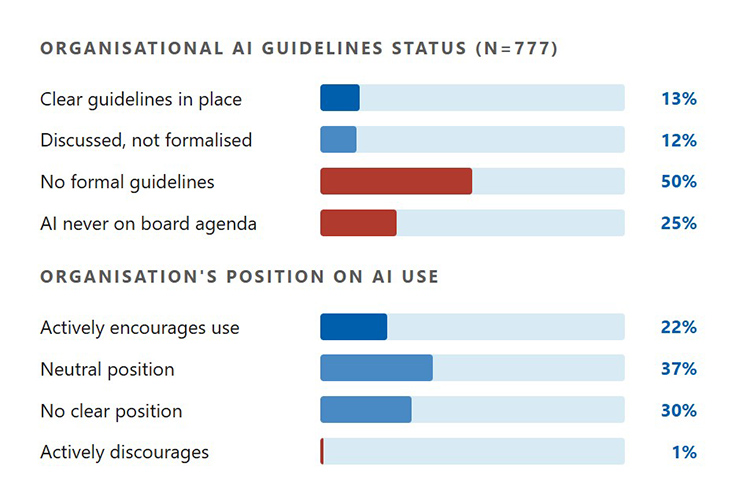

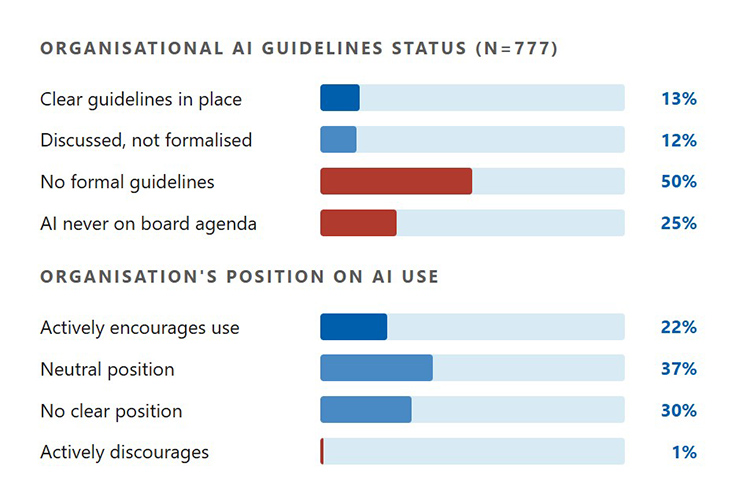

Governance is lagging far behind use

More than four in five board members operate without formal organisational AI guidelines. Most boards have not discussed the topic. Board members are routinely sharing strategic and financial data with AI tools without any organisational framework.

Where guidelines do exist, compliance is high — 93 percent of those with guidelines report staying within them. But this reassuring figure applies to only the small minority who have guidelines in the first place. The governance gap is structural, not behavioural.

Concerns

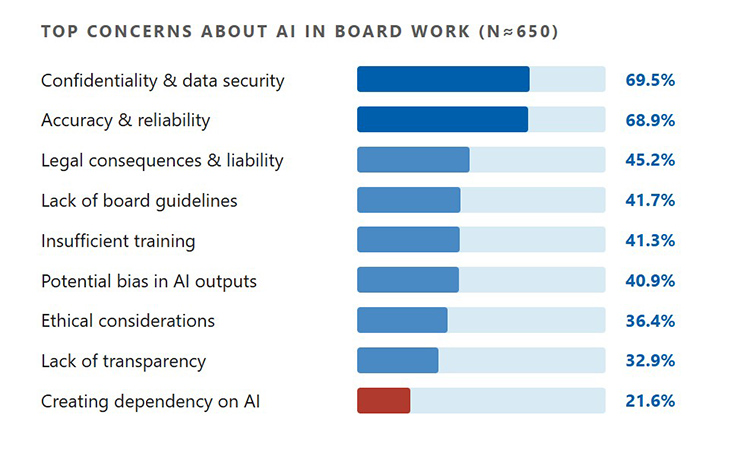

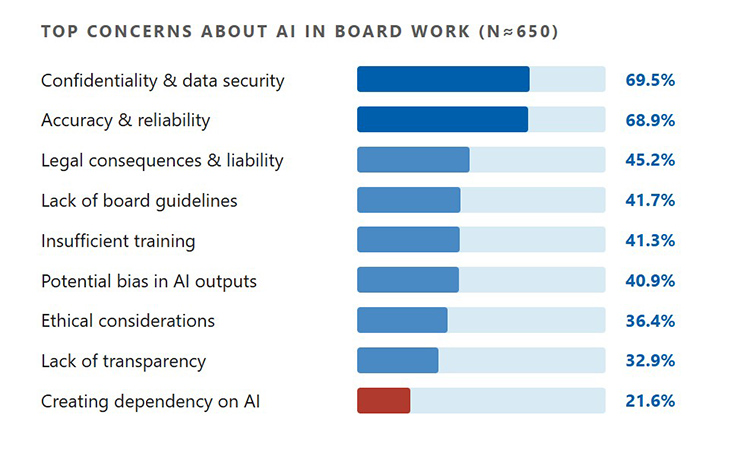

Confidentiality and reliability are the dominant worries

Concerns about confidentiality and data security (69.5%) and about accuracy and reliability (68.9%) dominate. These are not abstract worries but direct reflections of the data sharing and decision-support use cases that board members already engage in.

Legal consequences (45.2%) and lack of board guidelines (41.7%) round out the top concerns. Notably, the demand for governance structure is not about technical knowledge — board members prioritise clear guidelines above additional training.

Five board types

Five distinct AI maturity profiles

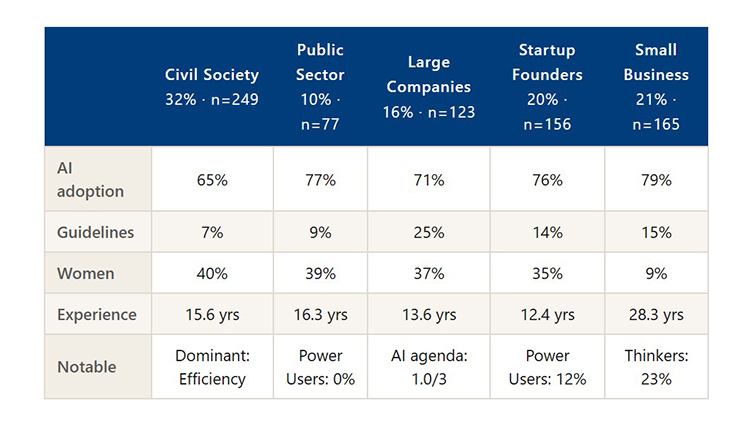

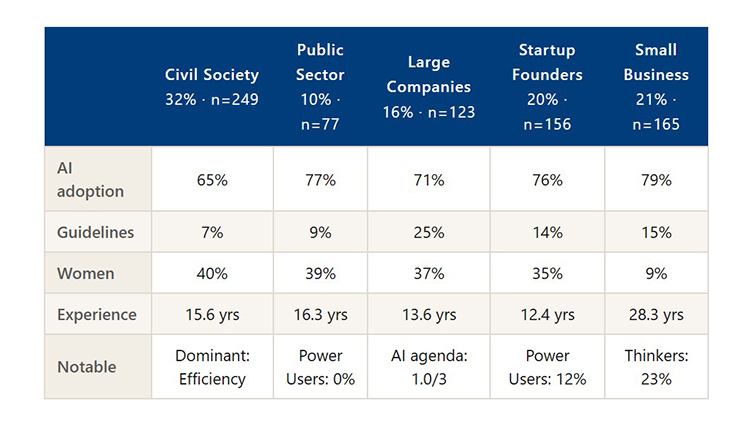

Grouping respondents by organisational context reveals five board types, each with a characteristic AI maturity profile. Civil society boards lag on adoption and have almost no formal governance, yet are the most gender-balanced. Large company boards are the only type where guidelines are present in a meaningful share. Startup founder boards stand out for their Power User concentration. Public sector boards are the only type without Power Users. Across all five types, Professionals form the largest single archetype.

Four user archetypes

Four distinct patterns of AI engagement

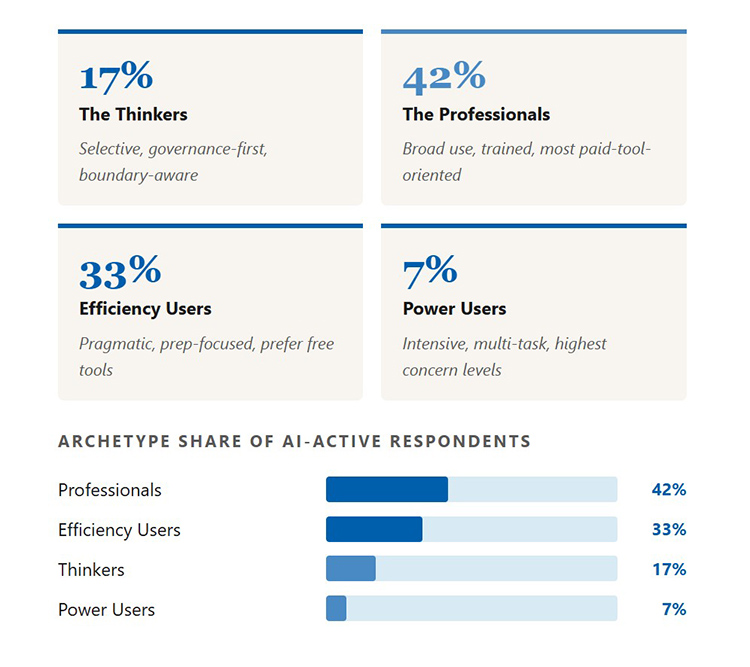

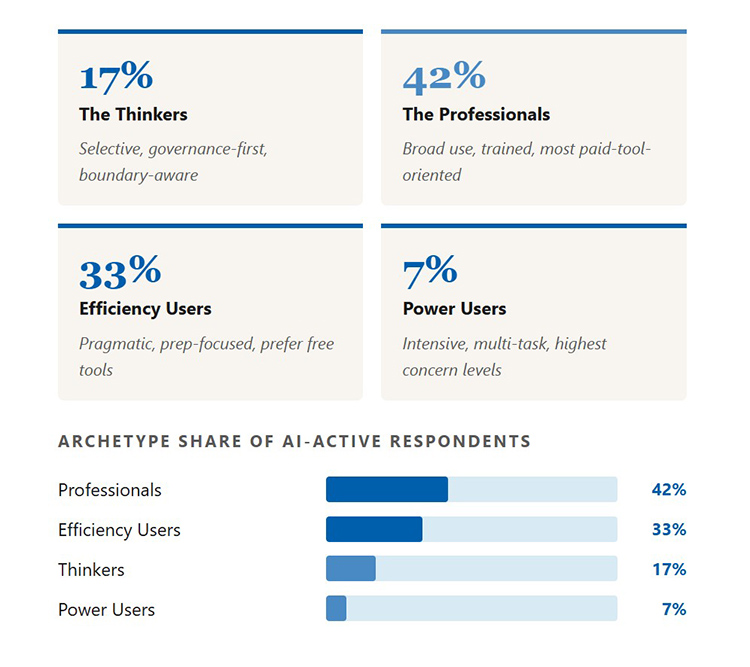

Clustering respondents by behaviour, attitude, and governance stance yields four archetypes. Professionals (42%) are the modal pattern — broad, trained, and paid-tool-oriented. Efficiency Users (33%) are the most pragmatic, focused narrowly on meeting preparation. Thinkers (17%) are selective and governance-conscious. Power Users (7%) are intensive, younger, and the only group that regularly notices irresponsible AI use among peers.

Across all archetypes and all board types, comfort with AI as a decision-maker is low and convergent — a principled position about human judgment in governance, not a knowledge gap training can close.